AI agents are rapidly changing the shape of the internet. What started as an effort to keep bots out is quickly becoming a much more complex challenge: distinguishing humans from machines, enabling safe automation, and doing all of it without forcing users to overshare their identity.

Against this backdrop, “proof of human” is moving from a niche concept to a foundational requirement for many digital experiences.

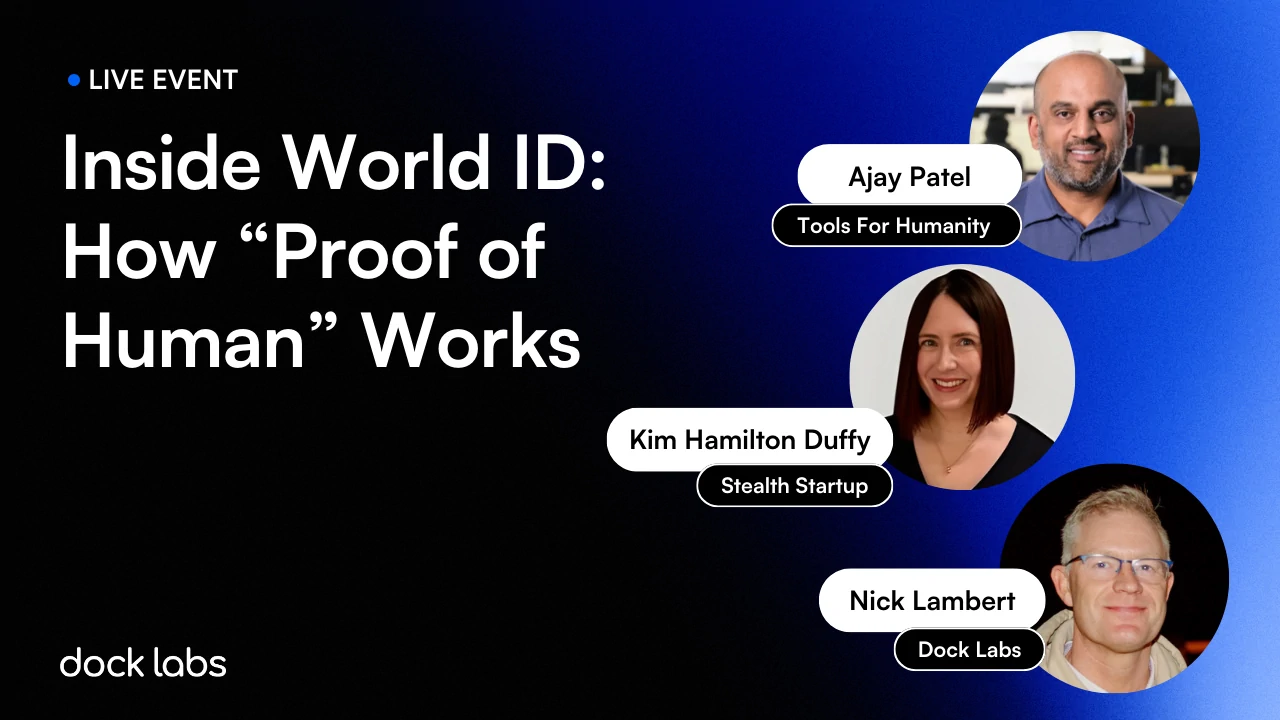

To unpack what’s really happening, and what the identity ecosystem needs to do next, we hosted a conversation with Ajay Patel, Head of World ID at Tools for Humanity, and Kim Hamilton Duffy, CEO of a stealth startup and former Executive Director of the Decentralized Identity Foundation.

The discussion explored the rising pressure created by AI-driven abuse, the risks of over-identification, the role biometrics can play when implemented carefully, and why interoperable, narrowly scoped credentials may be the path forward.

Below are the key takeaways from the session.

Why this topic is urgent now

- “Proof of human” is becoming a core internet requirement

- AI has crossed a “realism threshold,” driving abuse at scale: bots, fraud, impersonation, synthetic users, manipulation, and device “farm” operations running hundreds of phones.

- The internet is shifting from “keep bots out” to “we may need to let some bots in” (agents), which forces new identity and permission models.

- Traditional bot defenses are breaking

- CAPTCHAs are increasingly solvable by AI and increasingly painful for real users: puzzles keep escalating, to the point of deterring legitimate use.

- A key risk: the “over-identification” backlash

- Platforms respond to bots by demanding more invasive checks (selfie + government ID) even when unnecessary.

- This expands surveillance and the blast radius of breaches: the more data that is collected, the more can be leaked or repurposed. Protection then rests on policy (privacy-by-policy) rather than on the system’s design (privacy-by-design).

- The “agent wave” is outpacing infrastructure

- Agents moved from experimental to operational quickly.

- Deployment patterns often grant “ambient authority” (agents inherit secrets in the environment), while permissioning infrastructure is immature.

- Liability and accountability are unclear when agents transact on a user’s behalf.

Key framing: separate the use cases (don’t default to “government ID everywhere”)

- Different contexts require different assurance

- Regulated, high-stakes: banking, healthcare, government benefits → government-issued identity may be appropriate, within an established governance framework.

- Social/community contexts: may need “proof of human,” but not necessarily “unique human.”

- Basic abuse prevention: sometimes simple bot deterrence is enough.

- AI agents acting online: need “accountable delegation” more than “proof of human.”

- The principle advocated by Kim

- Match the credential/proof to the context: high-assurance, narrowly scoped credentials instead of “hand over your whole identity.”

World ID’s core problem statement (Ajay’s view)

- What changed

- Intelligence has scaled rapidly; abuse scales with it.

- The “more data” approach (KYC everywhere) is increasingly invasive and misapplied outside regulated contexts.

- What World ID aims to provide

- A new internet primitive to prove you’re a unique human online without revealing who you are.

- Designed to be usable by “my mom”:

- Unshareable (can’t easily be passed around)

- Hard to lose (recovery matters)

- If it’s shared, it should be explicit delegation (not accidental access leakage)

World ID architecture, as described

- Two layers

- The “proof-of-human credential”

- Represents uniqueness in a set of registered humans without identifying the individual within that set.

- The protocol layer

- In the World ID model Ajay described, three main roles work together:

- Issuers create and sign credentials; one example is the system that issues the proof-of-human credential after biometric uniqueness is established.

- Authenticators, which Ajay described as essentially wallet-like components controlled by the user, store the user’s credentials and generate cryptographic proofs when the user needs to prove something about themselves.

- Relying parties are the services or applications that request and verify those proofs in order to make an access or risk decision (for example, confirming that someone is human or meets an age requirement).

- Together, the model lets issuers create trusted credentials, users hold and present them via authenticators, and relying parties verify claims without requiring users to overshare their full identity.

- Focus: lifecycle + interoperability across issuers, authenticators, and relying parties.

- Why biometrics

- Presented as the only broadly available method that doesn’t depend on legal identity systems, which matters because large populations have no formal ID.

- The goal is inclusivity: regardless of where you’re born or your documentation status.
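
The issuer, authenticator, and relying party roles described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not World ID’s implementation: the class names, the claim names (`is_human`, `age_over_18`), and the use of an HMAC tag as a stand-in for a real digital signature or zero-knowledge proof are all hypothetical choices made so the sketch runs on the standard library. In particular, a shared-key HMAC means the relying party holds the issuer’s key, and the same tag is shown every time, which a real protocol would avoid.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Credential:
    claim: str   # hypothetical claim name, e.g. "is_human"
    value: bool  # the single fact being attested
    tag: bytes   # issuer's authentication tag over (claim, value)


def _tag(key: bytes, claim: str, value: bool) -> bytes:
    # Stand-in for a signature: HMAC-SHA256 over a canonical encoding.
    message = json.dumps([claim, value]).encode()
    return hmac.new(key, message, hashlib.sha256).digest()


class Issuer:
    """Creates and authenticates one credential per claim."""

    def __init__(self, key: bytes) -> None:
        self._key = key

    def issue(self, claim: str, value: bool) -> Credential:
        return Credential(claim, value, _tag(self._key, claim, value))


class Authenticator:
    """Wallet-like component: stores credentials, presents one at a time."""

    def __init__(self) -> None:
        self._credentials: dict[str, Credential] = {}

    def store(self, credential: Credential) -> None:
        self._credentials[credential.claim] = credential

    def present(self, claim: str) -> Credential:
        # Only the requested claim leaves the wallet.
        return self._credentials[claim]


class RelyingParty:
    """Requests a claim and checks that the issuer vouched for it."""

    def __init__(self, issuer_key: bytes) -> None:
        self._key = issuer_key

    def verify(self, credential: Credential, expected_claim: str) -> bool:
        if credential.claim != expected_claim:
            return False
        expected = _tag(self._key, credential.claim, credential.value)
        return hmac.compare_digest(credential.tag, expected) and credential.value is True
```

A relying party that asks for `is_human` receives only that credential, even if the wallet also holds `age_over_18`; a credential with a forged tag fails verification.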

The Orb + privacy-preserving uniqueness approach (Ajay’s explanation)

- Why build hardware (Orb)

- Performance + trust: needed a “trusted camera” that signs outputs and is harder to compromise.

- Why iris

- High entropy (a rich signal) and, unlike fingerprints or DNA, not something people “leave traces of everywhere.”

- Framed as: iris → “complex QR code” from which bits can be extracted.

- What the Orb does

- In-person, anonymous interaction.

- Checks: liveness, presence, humanness via on-device models + sensors (IR/RGB).

- Captures images → extracts an iris-derived code.

- How privacy is preserved (as described)

- World ID derives an iris code from the captured image and stores it in a privacy-preserving way using multi-party computation (MPC) and distributed storage. As Kim Hamilton Duffy noted, the system is designed not to retain raw biometrics but rather a derived representation intended to prevent reconstruction of the original iris image.

- The system answers: “Have we seen this code before?” to confirm uniqueness.

- Security intent: make reconstruction/compromise extremely hard (would require compromising many nodes globally).

- Even if an iris code leaked, it’s positioned as non-reversible back to a person’s actual iris image.
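
To make the “many nodes” intuition concrete, here is a minimal sketch of one way a derived code could be split so that no single storage node holds anything meaningful. It uses simple XOR secret sharing, chosen for illustration only; the session did not specify which sharing scheme or MPC protocol World ID uses, and the function names are hypothetical. A real MPC system would also answer “have we seen this code before?” without ever recombining the shares, which this sketch does not attempt.

```python
import secrets
from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def split_into_shares(code: bytes, n: int) -> list[bytes]:
    """Split `code` into n shares; all n are required to rebuild it."""
    if n < 2:
        raise ValueError("need at least two shares")
    # n - 1 shares are pure randomness...
    random_shares = [secrets.token_bytes(len(code)) for _ in range(n - 1)]
    # ...and the last share is the code XORed with all of them.
    last_share = reduce(xor_bytes, random_shares, code)
    return random_shares + [last_share]


def recombine(shares: list[bytes]) -> bytes:
    """XOR all shares together to recover the original code."""
    return reduce(xor_bytes, shares)
```

Each share on its own is indistinguishable from random bytes, so an attacker would need every node’s share to recover the code in this scheme.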

Biometrics: nuanced take (Kim’s view + discussion)

- Biometrics aren’t inherently “bad,” but governance matters

- Important distinction: biometrics that stay on the device (secure enclave) vs. biometrics that leave the device for centralized storage.

- The hardest problems are often procedural and incentive-based, not purely technical:

- Voluntary enrollment vs coercion

- Realistic opt-out without losing access

- Whether onboarding is auditable and transparent

- Why the SSI community should engage

- Because biometrics are already ubiquitous; the question is how to enforce “good characteristics” (privacy, control, minimized data).

A repeated theme: stop oversharing identity attributes

- Ajay’s critique of current patterns

- Many identity flows start by demanding full identity attributes (name/ID) even when unnecessary.

- If a verifier only needs age, they should ask for an age proof, not your full identity.

- The alternative direction

- Selective disclosure / ZKPs / boolean proofs (prove what’s needed, nothing more).

- This reduces:

- Data risk for relying parties (they don’t want to become honeypots)

- Surveillance and linkability

- Organizational reality

- “More data” became the default because it improved risk decisions.

- Shifting to “more certainty via better signals” challenges internal incentives (teams built around collecting/analyzing more data).
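
The “prove what’s needed, nothing more” idea can be shown with a small sketch of a wallet answering a boolean request. Everything here is a hypothetical illustration: the `answer_request` function, the `age_over_18` predicate name, and the wallet layout are assumptions, and there is no cryptography, so the relying party would simply be trusting the wallet. A real system would back the boolean with an issuer signature or a zero-knowledge proof.

```python
from datetime import date


def age_on(birth_date: date, today: date) -> int:
    """Full years elapsed between birth_date and today."""
    years = today.year - birth_date.year
    # Subtract one if this year's birthday has not happened yet.
    if (today.month, today.day) < (birth_date.month, birth_date.day):
        years -= 1
    return years


def answer_request(wallet: dict, predicate: str, today: date) -> dict:
    """Return only the boolean the verifier asked for, never the raw attributes."""
    if predicate == "age_over_18":
        return {"age_over_18": age_on(wallet["birth_date"], today) >= 18}
    raise ValueError(f"unsupported predicate: {predicate}")
```

The wallet may hold a name and a birth date, but the response contains a single key with a true/false value, so the relying party never stores data that could turn it into a honeypot.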

The “missing primitive” and why standards matter

- Ajay’s claim

- We lack a root-layer identity primitive robust enough for a world with bots/agents.

- Document-based identity is spoofable; global issuers are fragmented; many governments don’t issue digital credentials.

- Kim’s emphasis

- Interoperability and substitutability require open standards:

- Switch wallets/vendors without rebuilding everything

- Compose proofs/credentials from different systems

Proof-of-human as a complement to government credentials (emerging idea)

- Composite proofs

- Example discussed: a wallet that holds proof-of-human + an mDL could generate a combined proof (uniqueness + regulated attribute).

- Issuer-side value: fighting double issuance / double dipping

- Double issuance: one person gets multiple IDs.

- Double dipping: one person claims multiple benefits (trials, welfare, etc.).

- Concept: let issuers check a uniqueness signal (possibly via ZK proofs/tokenization) to trigger “deeper checks” only when needed.
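
One way to picture a uniqueness signal that catches double dipping without identifying the person is a per-scope pseudonymous token. The sketch below is an assumption-laden illustration, not a mechanism described in the session: `scoped_token`, `UniquenessRegistry`, and the scope strings are hypothetical, and the token is a plain HMAC of a holder secret. In a real design the token would need to come with a proof that it was derived from a valid proof-of-human credential; otherwise a holder could simply pick a new secret.

```python
import hashlib
import hmac


def scoped_token(holder_secret: bytes, scope: str) -> str:
    """Derive a stable pseudonym for one scope; different scopes give unrelated tokens."""
    return hmac.new(holder_secret, scope.encode(), hashlib.sha256).hexdigest()


class UniquenessRegistry:
    """Tracks which tokens an issuer has already seen within a single scope."""

    def __init__(self, scope: str) -> None:
        self.scope = scope
        self._seen: set[str] = set()

    def register(self, token: str) -> bool:
        """Return True on first sight; False signals that a deeper check is needed."""
        if token in self._seen:
            return False
        self._seen.add(token)
        return True
```

A second enrollment with the same token returns `False`, which is the point at which an issuer might trigger a deeper check. Because tokens differ across scopes, two issuers cannot link the same person by comparing their registries.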

Agent identity gap: what’s missing (Kim’s focus)

- We built identity systems for humans, not agents

- Need standards/mechanisms for:

- Delegation chains (who authorized the agent)

- Bounded permissions (least privilege)

- Auditability (what the agent did)

- Revocation (cut off agent authority quickly)

- Core principle

- Agents should have their own identifiers and credentials, not copies of the user’s identity.
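
The four requirements above (who authorized the agent, bounded permissions, auditability, revocation) can be sketched as a single-hop delegation record plus a gatekeeper that checks it. The names `Delegation` and `Gatekeeper`, the scope strings, and the log format are hypothetical, and no standard is implied. A full delegation chain would link several such records, which this sketch leaves out.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class Delegation:
    delegator: str             # who authorized the agent
    agent_id: str              # the agent's own identifier, not the user's
    scopes: frozenset[str]     # bounded permissions (least privilege)
    expires_at: datetime       # authority is time-limited
    revoked: bool = False      # flipped to cut off the agent quickly


@dataclass
class Gatekeeper:
    audit_log: list[tuple] = field(default_factory=list)

    def authorize(self, delegation: Delegation, action: str, now: datetime) -> bool:
        """Allow the action only if the delegation is live and covers it."""
        allowed = (
            not delegation.revoked
            and now < delegation.expires_at
            and action in delegation.scopes
        )
        # Every decision is recorded, allowed or not, for later audit.
        self.audit_log.append(
            (now.isoformat(), delegation.delegator, delegation.agent_id, action, allowed)
        )
        return allowed
```

The agent carries its own `agent_id` and a narrow set of scopes rather than a copy of the user’s credentials, so revoking one delegation does not affect the user’s own access.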

Decentralization & key management: the hard product problems

- Ajay’s point

- Identity primitives must be recoverable: losing keys can’t mean losing your identity forever.

- That creates tension with decentralization (recovery without central control).

- World ID direction mentioned

- Architectural updates toward multi-issuer + multi-wallet / multi-authenticator.

- MPC-based approaches to enable recovery/credential portability while preserving protocol principles.

- Orb decentralization

- Goal: open-source designs/software, third-party audits, move toward community-operated governance so TFH becomes “a developer on the protocol,” not the controller.

Operational questions surfaced in Q&A: biometric change/expiry

- Biometrics change over time

- Iris patterns can change with age → implies refresh/re-issuance mechanisms.

- Protocol-level expiration

- They discussed building expiration concepts into the protocol so issuers can set rules and users can be notified to refresh.

- Step-up flows

- For high-risk relying party use cases: require “re-auth” via hardware interaction, then refresh the user’s custody package.
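
The expiration idea discussed above can be sketched as a status check that an issuer-set lifetime feeds into. The `Status` values, the `credential_status` function, and the 30-day notice window are hypothetical choices for illustration; the session did not describe concrete lifetimes or field names.

```python
from datetime import datetime, timedelta
from enum import Enum


class Status(Enum):
    VALID = "valid"
    REFRESH_SOON = "refresh_soon"  # user should be notified to refresh
    EXPIRED = "expired"            # step-up / re-issuance required


def credential_status(
    issued_at: datetime,
    lifetime: timedelta,                 # set by the issuer
    now: datetime,
    notice: timedelta = timedelta(days=30),
) -> Status:
    """Classify a credential by how close it is to its issuer-defined expiry."""
    expires_at = issued_at + lifetime
    if now >= expires_at:
        return Status.EXPIRED
    if now >= expires_at - notice:
        return Status.REFRESH_SOON
    return Status.VALID
```

A wallet could run this check locally and prompt the user during the notice window, while a high-risk relying party could treat anything other than `VALID` as a trigger for a step-up flow.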

The “North Star” principles repeatedly stated

- Inclusive: workable for people without formal legal ID.

- Performant & low-friction: fast enough for real-world, web-scale use.

- Private & secure: minimize data, reduce honeypots, avoid linkability/surveillance.

- Interoperable: standardization + composability + substitutability.

- User-controlled: self-custody and local processing where possible; explicit delegation for sharing.

Practical takeaways for identity builders watching

- Don’t collapse every risk into “collect more ID”

- Treat “proof of human,” “uniqueness,” “age,” “citizenship,” “financial entitlement,” and “agent delegation” as distinct requirements.

- Design for “proofs” not “profiles”

- Ask for the minimal claim needed (boolean/attestation), especially outside regulated contexts.

- Plan for the hard parts

- Recovery, revocation, composability, governance, and “no phone home” properties are where systems succeed or fail at scale.